The Great Recession (or the Great Hangover) that began in 2008 did not have to happen. Its causes and consequences are not mysterious. Indeed, this particular and very painful episode affirms what the best nonpartisan economists have tried to tell our politicians and policy-makers for decades, namely, that the more they try to inflate and direct the economy, the more damage the rest of us will suffer sooner or later. Hindsight is always 20-20, but in this instance, good old-fashioned common sense would have provided all the foresight needed to avoid the mess we’re in.

In this essay, originally published December 2009, we trace the path of the recession from its origins in the housing market bubble to the policies offered to cure the aftermath.

Introduction

The theme of “The House That Uncle Sam Built: The Untold Story of the Great Recession of 2008” is that government policy, not a failure of free markets, caused the economic trauma we have been experiencing. We do not live in a free market. We live in a mixed economy, and the mixture varies by industry. The technology sector is primarily free; financial services are primarily controlled by government. It is not surprising that the most heavily regulated and controlled segment of the economy, financial services, experienced the biggest problems. These problems were created by the actions of the Federal Reserve combined with government housing policy (especially the government-sponsored enterprises – Freddie Mac and Fannie Mae). Misguided government interference in the market is the real culprit in laying the foundation for the Great Recession.

This paper provides a “common sense” and understandable outline of fundamental causes and cures. The analysis is based on long-proven economic laws. Despite the wishes and hopes of politicians, economic laws are just as immutable as the laws of physics. If you jump off a ten-story building, hitting the ground will not be pleasant. If the Federal Reserve holds interest rates below the natural market rate by rapidly expanding the money supply (“printing” money), as Alan Greenspan did, individuals and businesses will make bad investment decisions and there will be negative consequences for our long-term economic well-being. There are no free lunches.

When a doctor misdiagnoses a disease, his treatment will likely make the patient sicker. If we misdiagnose the causes of the Great Recession, our treatment will reduce our long-term standard of living. While the U.S. economic system is highly resilient, and we will likely have some form of economic recovery, almost every significant government policy action taken in response to the Great Recession will reduce the quality of life in the long term. Understanding that failed government policies, not market failure, caused our economic challenges is critical to defining the appropriate cures. Since government created the problem, it is irrational to believe that more government is the cure. We owe it to ourselves and to our children and grandchildren to take these issues very seriously.

John Allison, Chairman, BB&T

The House That Uncle Sam Built

The man who parties like there is no tomorrow puts his body through an “up” and a “down” course that looks a lot like the business cycle. At the party, the man freely imbibes. He has a great time before stumbling home at 2:00 a.m., where he crashes on the sofa. A few hours later, he awakens in the grip of the dreaded hangover. He then has a choice to make: get a short-term lift from another drink or sober up. If he chooses the latter and endures a few hours of discomfort, he can recover. In any event, no one would say the hangover is when the harm is done; the harm was done the night before and the hangover is the evidence.

There is no better way to understand a crisis that began in the housing sector than to begin by thinking about a house.

A house must be built on a firm, sustainable foundation. If it’s slapped together with good intentions but lousy materials and workmanship, it will collapse prematurely. If too much lumber and too many bricks are piled on top of a weak support structure, or if housing material is misallocated throughout the house, then an apparently solid structure can crumble like sand once its weaknesses are exposed. Americans built and bought a lot of houses in the past decade not, it turns out, for sound reasons or with solid financing. Why this occurred must be part of any good explanation of the Great Recession.

But isn’t home ownership a great thing, the very essence of the vaunted “American Dream”? In the wealthiest country in the world, shouldn’t everyone be able to own their own home? What could be wrong with any policy that aims to make housing more affordable? Well, we may wish it were not so, but good intentions cannot insulate us from the consequences of bad policies.

Politicians became so enthralled with home ownership and affordable housing – and the points they could score by claiming to be their champions – that they pushed and shoved the economy down an artificial path that invited an inevitable (and painful) correction. Congress created massive government-sponsored enterprises and then encouraged them to degrade lending standards. Congress bent tax law to favor real estate over other investments. The Federal Reserve, another creation of Congress, flooded the economy with liquidity through reckless easy-money policies and drove interest rates down. Each of these policies drew too many of the economy’s resources into the housing sector. For a substantial part of this decade, our policy-makers in Washington were laying a very poor foundation for economic growth.

Was Free Enterprise the Villain?

Call it free enterprise, capitalism or laissez faire – blaming supposedly unfettered markets for every economic shock has been the monotonous refrain of conventional wisdom for a hundred years. Among those making such claims are politicians who posture as our rescuers, bureaucrats who are needed to implement the rescue plans and special interests who get rescued. Then there are our fellow academics – the ones who add a veneer of respectability – trumpeting the “stimulus” the rest of us get from being rescued.

Rarely does it occur to these folks that government intervention might be the cause of the problem. Yet, we have the Federal Reserve System’s track record, thousands of pages of financial regulations, and thousands more pages of government housing policy that demonstrate the utter absence of “laissez faire” in areas of the economy central to the current recession.

Understanding recessions requires knowing why lots of people make the same kinds of mistakes at the same time. In the last few years, those mistakes were centered in the housing market, as many people overestimated the value of their houses or imagined that their value would continue to rise. Why did everyone believe that at the same time? Did some mysterious hysteria descend upon us out of nowhere? Did people suddenly become irrational? The truth is this: People were reacting to signals produced in the economy. Those signals were erroneous. But it was the signals and not the people themselves that were irrational.

Imagine we see an enormous rise in the number of traffic accidents in a major city. Cars keep colliding at intersections as drivers all seem to make the same sorts of mistakes at once. Is the most likely explanation that drivers have irrationally stopped paying attention to the road, or would we suspect that something might be wrong with the traffic lights? Even with completely rational drivers, malfunctioning traffic signals will lead to lots of accidents that look like massive irrationality.

Market prices are much like traffic signals. Interest rates are a key traffic signal. They reconcile some people’s desire to save – delay consumption until a future date – with others’ desire to invest in ideas, materials or equipment that will make them and their businesses more productive. In a market economy, interest rates change as tastes and conditions change. For instance, if people become more interested in future consumption relative to current consumption, they will increase the amount they save. This, in turn, will lower interest rates, allowing other people to borrow more money to invest in their businesses. Greater investment means more sophisticated production processes, which means more goods will be available in the future. In a normally functioning market economy, the process ensures that savings equal investment, and both are consistent with other conditions and with the public’s underlying preferences.
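The price-signal mechanism described above can be made concrete with a textbook loanable-funds sketch. This is a minimal illustration under stated assumptions: saving rises and investment falls in a straight line with the interest rate, and the class name `LoanableFunds` and every number below are hypothetical, chosen only to show the direction of the effect rather than drawn from any data.

```python
from dataclasses import dataclass


@dataclass
class LoanableFunds:
    """Hypothetical linear loanable-funds market; the rate r is in percent."""
    save_base: float     # saving supplied at r = 0
    save_slope: float    # extra saving per percentage point of r
    invest_base: float   # investment demanded at r = 0
    invest_slope: float  # investment given up per percentage point of r

    def saving(self, r: float) -> float:
        return self.save_base + self.save_slope * r

    def investment(self, r: float) -> float:
        return self.invest_base - self.invest_slope * r

    def equilibrium(self) -> tuple[float, float]:
        """Rate at which saving equals investment, and the amount lent."""
        r = (self.invest_base - self.save_base) / (self.save_slope + self.invest_slope)
        return r, self.saving(r)


before = LoanableFunds(save_base=100, save_slope=20, invest_base=300, invest_slope=20)
# Households shift toward future consumption: they save 40 more at every rate.
after = LoanableFunds(save_base=140, save_slope=20, invest_base=300, invest_slope=20)

print(before.equilibrium())  # (5.0, 200.0)
print(after.equilibrium())   # (4.0, 220.0)
```

When households decide to save more at every interest rate, the rate that balances saving and investment falls (here from 5% to 4%) and the amount saved and invested rises to match. The straight-line form is only a simplification; the direction of the result does not depend on it.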

As was made all too obvious in 2008, ours is not a normally functioning market economy. Government has inserted itself into almost every transaction, manipulating and distorting price signals along the way. Few interventions are as momentous as those associated with monetary policy implemented by the Federal Reserve. Money’s essence is that it is a generally accepted medium of exchange, which means that it is half of every act of buying and selling in the economy. Like blood circulating in the body, it touches everything. When the Fed tinkers with the money supply, it affects not just one or two specific markets, as housing policy does, but every single market in the entire economy. The Fed’s powers give it enormous scope for creating economic chaos.

When central banks like the Federal Reserve inflate, they provide banks with more money to lend, even though the public has not provided any more savings. Banks respond by lowering interest rates to draw in new borrowers. The borrowers see the lower interest rate and believe that it signals that consumers are more interested in delayed consumption relative to immediate consumption. Borrowers then begin to invest in those longer-term projects, which are now relatively more desirable given the lower interest rate. The problem, however, is that the demand for those longer-term projects is not really there. The public is not more interested in future consumption, even though the interest rate signals suggest otherwise. Like our malfunctioning traffic signals, an inflation-distorted interest rate is going to cause lots of “accidents.” Those accidents are the mistaken investments in longer-term production processes.
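A similar sketch can show what happens when new credit, rather than new saving, enters the loan market. In this hypothetical model (the function `market_rate`, its parameters and all numbers are illustrative assumptions), the central bank adds `new_credit` units of lendable funds at every interest rate while households' saving behavior stays exactly as it was.

```python
def market_rate(save_base: float, save_slope: float, invest_base: float,
                invest_slope: float, new_credit: float = 0.0) -> float:
    """Rate (in percent) at which saving plus newly created credit equals investment.

    Hypothetical linear model:
      saving     = save_base + save_slope * r
      investment = invest_base - invest_slope * r
    """
    return (invest_base - save_base - new_credit) / (save_slope + invest_slope)


params = dict(save_base=100, save_slope=20, invest_base=300, invest_slope=20)

natural = market_rate(**params)                   # no new money
distorted = market_rate(**params, new_credit=40)  # 40 units of new credit

# What households choose to save, and what borrowers choose to invest, at the lower rate.
saving = params["save_base"] + params["save_slope"] * distorted
investment = params["invest_base"] - params["invest_slope"] * distorted

print(natural, distorted)     # 5.0 4.0
print(saving, investment)     # 180.0 220.0
print(investment - saving)    # 40.0
```

The rate falls from 5% to 4% and borrowers invest 220 units, yet at that lower rate households choose to save only 180, less than the 200 they saved before. The 40-unit gap is investment that no one has actually saved for, which is the mismatch between the interest rate signal and underlying preferences described above.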

“I want to roll the dice a little bit more in this situation toward subsidized housing.” – Barney Frank, 2003

Eventually those producers engaged in the longer processes find the cost of acquiring their raw materials to be too high, particularly as it becomes clear that the public’s willingness to defer consumption until the future is not what the interest rate suggested would be forthcoming. These longer-term processes are then abandoned, resulting in falling asset prices (both capital goods and financial assets, such as the stock prices of the relevant companies) and unemployed labor in sectors associated with the capital goods industries.

So begins the bust phase of a monetary policy-induced cycle; as stock prices fall, asset prices “deflate,” overall economic activity slows and unemployment rises. The bust is the economy going through a refitting and reshuffling of capital and labor as it eliminates mistakes made during the boom. The important points here are that the artificial boom is when the mistakes were made, and it is during the bust that those mistakes are corrected.

From 2001 to about 2006, the Federal Reserve pursued the most expansionary monetary policy since at least the 1970s, pushing interest rates far below their natural rate. In January of 2001 the federal funds rate, the major interest rate that the Fed targets, stood at 6.5%. Just 23 months later, after 12 successive cuts, the rate stood at a mere 1.25% – more than 80% below its previous level. It stayed below 2% for two years before the Fed finally began raising rates in June of 2004. The rate was so low during this period that the real federal funds rate – the nominal rate minus the rate of inflation – was negative for two and a half years. This meant that, in effect, banks were being paid to borrow money! Rapidly climbing after mid-2004, the rate was back up to the 5% mark by May of 2006, just about the time that housing prices started their collapse. In order to maintain that low federal funds rate for that five-year period, the Fed had to increase the money supply significantly. One common measure of the money supply grew by 32.5%. A lot of economically irrational investments were made during this time, but it was not because of “irrational exuberance brought on by a laissez-faire economy,” as some suggested. It is unlikely that lots of very similar bad investments are the result of mass irrationality, just as a surge in traffic accidents is more likely the result of malfunctioning traffic signals than of lots of people forgetting how to drive overnight. Those investments resulted from malfunctioning market price signals due to the Fed’s manipulation of money and credit. Poor monetary policy by an agency of government is hardly “laissez faire.”
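The arithmetic behind two of the figures above is simple enough to check directly. The sketch below computes the size of the cut from 6.5% to 1.25% and applies the definition of the real rate given in the text (nominal rate minus inflation). The function names are illustrative, and the 2% inflation figure is an assumption chosen for the example, not a number from the essay.

```python
def percent_decline(old: float, new: float) -> float:
    """Percentage fall from `old` to `new`."""
    return (old - new) / old * 100


def real_rate(nominal: float, inflation: float) -> float:
    """Real interest rate approximated as the nominal rate minus inflation (percent)."""
    return nominal - inflation


cut = percent_decline(6.5, 1.25)  # January 2001 rate vs. the rate 23 months later
print(f"decline: {cut:.1f}%")     # decline: 80.8%

ASSUMED_INFLATION = 2.0  # illustrative assumption, not a figure from the text
print(f"real rate: {real_rate(1.25, ASSUMED_INFLATION):+.2f}%")  # real rate: -0.75%
```

Any inflation rate above the nominal rate produces a negative real rate, which is the condition the text describes as banks being, in effect, paid to borrow.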

What About Housing?

With such an expansionary monetary policy, the housing market was sent contradictory and incorrect signals. On one hand, housing and housing-related industries were given a giant green light to expand. It is as if the Fed supplied them with an abundance of lumber, and encouraged them to build their economic house as big as they pleased.

This would have made sense if the increased supply of lumber (capital) had been supported by the public’s desire to increase future consumption relative to immediate consumption – in other words, if the public had truly wanted to save for the bigger house. But the public did not. Interest rates were not low because the public was in the mood to save; they were low because the Fed had made them so by fiat. Worse, Fed policy gave the would-be suppliers of capital – those who might have been tempted to save – a giant red light. With rates so low, they had no incentive to put their money in the bank for others to borrow.

So the economic house was slapped together with what appeared to be an unlimited supply of lumber. It was built higher and higher, drawing resources from the rest of the economy. But it had no foundation. Because the capital did not reflect underlying consumer preferences, there was no support for such a large house. The weaknesses in the foundation were eventually exposed, and the structure, by now a 70-story skyscraper built on a foundation made for a single-family home, began to teeter. It finally fell in the autumn of 2008.

But why did the Fed’s credit all flow into housing? It is true that easy credit financed a consumer-borrowing binge, a mergers-and-acquisitions binge and an auto binge. But the bulk of the credit went to housing. Why? The answer lies in government’s efforts to increase the affordability of housing.

Government intervention in the housing market dates back to at least the Great Depression. The more recent government initiatives relevant to the current recession began in the Clinton administration. Since then, the federal government has adopted a variety of policies intended to make housing more affordable for lower- and middle-income groups and various minorities. Among these government actions, those dealing with government-sponsored enterprises active in mortgage markets were central. Fannie Mae (the Federal National Mortgage Association) and Freddie Mac (the Federal Home Loan Mortgage Corporation) are the key players here. Neither Fannie nor Freddie is a “free-market” firm. They were chartered by the federal government, and although they were nominally privately owned until the onset of the bust in 2008, they were granted a number of government privileges in addition to carrying an implicit promise of government support should they ever get into trouble.

Fannie and Freddie did not actually originate most of the bad loans at the heart of the housing crisis. Loans were made by banks and mortgage companies that knew they could sell those loans in the secondary mortgage market, where Fannie and Freddie would buy and repackage them to sell to other investors. Fannie and Freddie also invented a number of the low-down-payment and other creative, high-risk types of loans that came into use during the housing boom. The loan originators were willing to offer these kinds of loans because they knew that Fannie and Freddie stood ready to buy them up. Because Fannie and Freddie carried an implicit promise of government support, the risk was being passed on from the originators to the taxpayers. If homeowners defaulted, the buyers of the mortgages would be harmed, not the originators. The presence of Fannie and Freddie in the mortgage market dramatically distorted the incentives for private actors such as the banks.

The Fed’s low interest rates, combined with Fannie and Freddie’s government-sponsored purchases of mortgages, made it highly and artificially profitable to lend to anyone and everyone. The banks and mortgage companies didn’t need to be any greedier than they already were. When banks saw that Fannie and Freddie were willing to buy virtually any loan made to under-qualified borrowers, they made a lot more of them. Greed is no more to blame for these bad mortgages than gravity is to blame for plane crashes. Gravity is always present, just like greed. Only the Federal Reserve’s easy money policy and Congress’ housing policy can explain why the bubble happened when it did, where it did.

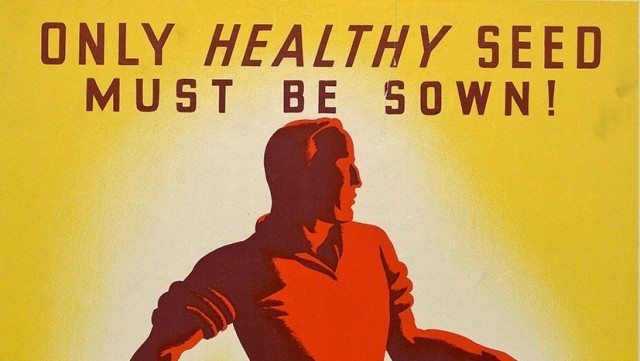

Of further significance is the fact that Fannie and Freddie were under great political pressure to keep housing increasingly affordable (while at the same time promoting instruments that depended on the constantly rising price of housing) and to extend opportunities to historically “under-served” groups. Many of the new mortgages with low or even zero-down payments were designed in response to this pressure. Not only were lots of funds available to lend, and not only was government implicitly subsidizing the purchase of mortgages, but it was also encouraging lenders to find more borrowers who previously were thought unable to afford a mortgage.

Partnerships among Fannie and Freddie, mortgage companies, community action groups and legislators combined to make mortgages available to many people who should never have had them, based on their income and assets. Throw in the effects of the Community Reinvestment Act, which required lenders to serve under-served groups, and zoning and land-use laws that pushed housing into limited space in the suburbs and exurbs (driving up prices in the process) and you have the ingredients of a credit-fueled and regulatory-directed housing boom and bust.

All told, huge amounts of wealth and capital poured into producing houses as a result of these political machinations. The Case-Shiller Index clearly shows unprecedented increases in home prices prior to the bust in 2008. From 1946 to 1996, there had been no significant growth in the inflation-adjusted price of residential real estate. In contrast, the decade that followed saw skyrocketing prices.

It’s worth noting that even tax policy has been biased toward fostering investments in housing. Gains on owner-occupied homes are taxed at a much lower rate than gains on other investments. Changes in the 1990s made it possible for families to pocket any capital gains (income from price appreciation) on their primary residences, up to $500,000, every two years. That translates into an effective rate of 0%, versus the capital gains tax rates that apply to other forms of investment. The differential tax treatment of capital gains made housing a relatively better investment than the alternatives. Although tax cuts are desirable for promoting economic growth, when politicians tinker with the tax code to favor the sorts of investments they think people should make, we should not be surprised if market distortions result.
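A small sketch shows how the exclusion changes the after-tax comparison between a house and another investment. It assumes, for illustration only, a $400,000 gain and a flat 15% tax on capital gains from other investments; the function names are hypothetical, and real tax rules (filing status, holding periods, ownership and use tests) are ignored.

```python
HOME_EXCLUSION = 500_000  # gain excludable on a primary residence, per the text


def tax_on_home_gain(gain: int, rate: float) -> float:
    """Tax on a primary-residence gain; only the part above the exclusion is taxed."""
    return max(gain - HOME_EXCLUSION, 0) * rate


def tax_on_other_gain(gain: int, rate: float) -> float:
    """Tax on the same gain from another investment, with no exclusion."""
    return gain * rate


RATE = 0.15     # assumed capital gains rate, for illustration only
gain = 400_000  # hypothetical gain

home_tax = tax_on_home_gain(gain, RATE)
other_tax = tax_on_other_gain(gain, RATE)
print(f"house: ${home_tax:,.0f}  other investment: ${other_tax:,.0f}")
# house: $0  other investment: $60,000
```

On these assumptions the same $400,000 gain leaves $60,000 more in the owner's pocket when it comes from a house, which is the kind of tilt toward housing the paragraph describes.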

Former Fed chair Alan Greenspan had made it clear that the Fed would not stand idly by whenever a crisis threatened to cause a major devaluation of financial assets. Instead, it would respond by providing liquidity to stem the fall. Greenspan declared there was little the Fed could do to prevent asset bubbles but that it could always cushion the fall when those bubbles burst. By 1998, the idea that the Fed would always bail out investors after a burst bubble had become known as the “Greenspan Put.” (A “put” is a financial contract that gives its buyer the right, but not the obligation, to sell an asset at a preset price.) Having seen the Fed bail out investors this way in a series of events starting as early as the 1987 stock market crash and extending through 9/11, players in the housing market had every reason to expect that if the value of houses and other instruments they were creating should fall, the Fed would bail them out, too. The Greenspan Put became yet another government “green light,” signaling investors to take risks they might not otherwise take.
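The payoff of an ordinary put option shows why a perceived “Greenspan Put” encourages risk-taking. The sketch uses the standard payoff at expiry, max(strike - price, 0). Treating expected Fed support as if it were such a put is the essay's analogy, and the strike of 90 is a hypothetical number.

```python
def put_payoff(strike: float, price: float) -> float:
    """Value at expiry of the right to sell an asset at `strike` when it trades at `price`."""
    return max(strike - price, 0.0)


STRIKE = 90.0  # hypothetical floor
for price in (120.0, 90.0, 60.0):
    protected = price + put_payoff(STRIKE, price)  # asset plus put
    print(f"market price {price:.0f} -> asset plus put worth {protected:.0f}")
# market price 120 -> asset plus put worth 120
# market price 90 -> asset plus put worth 90
# market price 60 -> asset plus put worth 90
```

However far the asset falls, the holder of the asset plus the put never ends up with less than 90, while every gain above 90 is kept. An investor who believes such a floor exists has every reason to place bigger bets.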

As housing prices began to rise, and in some areas rise enormously, investors saw opportunities to create new financial instruments based on those rising housing prices. These instruments constituted the next stage of the boom in this boom-bust cycle, and their eventual failure became the major focus of the bust.

Fancy Financial Instruments – Cause or Symptom?

Banks and other players in the financial markets capitalized on the housing boom to create a variety of new instruments. These new instruments would enrich many but eventually lose their value, bringing down several major companies with them. They were all premised on the belief that housing prices would continue to rise, which would enable people who had taken out the new mortgages to keep making their payments.

Mortgages with low or even nonexistent down payments appeared. The ownership stake the borrower had in the house was largely the equity that came from the house increasing in value. With little to no equity at the start, the amount borrowed and therefore the monthly payments were fairly high, meaning that should the house fall in value, the owner could end up owing more on the house than it was worth.
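The risk of a small down payment can be shown with a few lines of integer arithmetic. This hypothetical sketch (the `equity` helper and all figures are illustrative) compares 20%, 3% and 0% down on a $200,000 house whose price then falls 10%. It ignores the small amount of principal repaid in a loan's early years.

```python
def equity(price: int, down_pct: int, change_pct: int) -> int:
    """Owner's equity in dollars after the house price changes by `change_pct` percent.

    Hypothetical helper: assumes the loan balance still equals the original
    amount borrowed, since early payments repay very little principal.
    """
    loan = price * (100 - down_pct) // 100
    new_value = price * (100 + change_pct) // 100
    return new_value - loan


for down in (20, 3, 0):
    print(f"{down}% down: equity {equity(200_000, down, -10):+,} dollars")
# 20% down: equity +20,000 dollars
# 3% down: equity -14,000 dollars
# 0% down: equity -20,000 dollars
```

A buyer who put 20% down still has a cushion after a 10% decline; buyers with little or no down payment owe more than the house is worth.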

“If it ain’t broke, why do you want to fix it? Have the GSEs ever missed their housing goals?” – Maxine Waters, 2003

The large flow of mortgage payments resulting from the inflation-generated housing bubble was then converted into a variety of new investment vehicles. In the simplest terms, financial institutions such as Fannie and Freddie began to buy up these mortgages from the originating banks or mortgage companies, package them together and sell the flow of payments from that package as a bond-like instrument to other investors. At the time of their nationalization in the fall of 2008, Fannie and Freddie owned or controlled half of the entire mortgage market. Investors could buy so-called “mortgage-backed securities” and earn income ultimately derived from the mortgage payments of the homeowners. The sellers of the securities, of course, took a cut for being the intermediary. They also divided the securities into “tranches,” or levels of risk. All the tranches drew on payments from the same pool of mortgages, but the lowest-risk tranches were paid first. The high-risk tranches were paid from whatever funds were left over, so they were the first to absorb losses when homeowners fell behind.
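The payment priority among tranches can be illustrated with a stylized “sequential-pay waterfall.” In this minimal sketch, which is an assumption for illustration and far simpler than real deal structures, every tranche draws on the same pool of collected mortgage payments, and each tranche must be paid in full before the next one receives anything. The function name, tranche sizes and collection amounts are hypothetical.

```python
def waterfall(collections: float, tranche_sizes: list[float]) -> list[float]:
    """Pay tranches in order of seniority; each is paid in full before the next gets anything."""
    payments = []
    remaining = collections
    for size in tranche_sizes:
        paid = min(size, remaining)
        payments.append(paid)
        remaining -= paid
    return payments


# Hypothetical pool owing 100 per period, split senior / middle / junior as 70 / 20 / 10.
tranches = [70.0, 20.0, 10.0]
print(waterfall(100.0, tranches))  # [70.0, 20.0, 10.0]  everyone paid
print(waterfall(85.0, tranches))   # [70.0, 15.0, 0.0]   shortfall hits the bottom first
print(waterfall(60.0, tranches))   # [60.0, 0.0, 0.0]
```

When collections fall short, the shortfall lands on the lowest tranche first; the top tranche is touched only after everything beneath it has been wiped out. That ordering is why the top tranches looked so safe as long as housing prices kept rising.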

Buyers snapped up these instruments for a variety of reasons. First, as housing prices continued to rise, these securities looked like a steady source of ever-increasing income. The risk was perceived to be low, given the boom in the housing market. Of course that boom was an illusion that eventually revealed itself.

Second, most of these mortgage-backed securities had been rated AAA, the highest rating, by the three rating agencies: Moody’s, Standard & Poor’s, and Fitch. This led investors to believe these securities were very safe. It has also led many to charge that markets were irrational. How could these securities, which were soon to be revealed as terribly problematic, have been rated so highly? The answer is that those three rating agencies are a government-created cartel not subject to meaningful competition.

In 1975, the Securities and Exchange Commission decided that only the ratings of three “Nationally Recognized Statistical Rating Organizations” would satisfy the ratings requirements of a number of government regulations. Since then, their activities have been geared toward satisfying the demands of regulators rather than toward true competition. If they made an error in their ratings, there was no possibility of a new entrant coming in with a more accurate technique. The result was that many instruments were rated AAA that never should have been, not because markets somehow failed due to greed or irrationality, but because government had cut short the learning process of true market competition.

Third, changes in the international regulations covering the capital ratios of commercial banks made mortgage-backed securities look artificially attractive as investment vehicles for many banks. Specifically, the Basel Accord of 1988 stipulated that if banks held securities issued by government-sponsored enterprises, they could hold less capital than if they held other assets, including the very mortgages they might originate. Banks could originate a mortgage and then sell it to Fannie Mae. Fannie would then package it with other mortgages into a mortgage-backed security. If the very same bank bought that security (which relied on income from the mortgage it originated), it would be required to hold only 40 percent of the capital it would have had to hold if it had just kept the original mortgage.
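The 40 percent figure follows from the Basel I risk weights. This sketch assumes the weights commonly applied in the United States under that accord (50% for a qualifying residential mortgage held directly, 20% for mortgage-backed securities issued by Fannie Mae or Freddie Mac) and the 8% minimum ratio of capital to risk-weighted assets. The function name and the $100,000 mortgage are illustrative.

```python
MIN_CAPITAL_RATIO = 0.08  # Basel I: capital of at least 8% of risk-weighted assets

# Assumed Basel I risk weights as applied by U.S. regulators.
RISK_WEIGHTS = {
    "mortgage held directly": 0.50,
    "GSE mortgage-backed security": 0.20,
}


def required_capital(amount: float, holding: str) -> float:
    """Minimum capital for a holding: amount x risk weight x 8%."""
    return amount * RISK_WEIGHTS[holding] * MIN_CAPITAL_RATIO


loan = 100_000
as_mortgage = required_capital(loan, "mortgage held directly")
as_mbs = required_capital(loan, "GSE mortgage-backed security")

print(f"hold the mortgage: ${as_mortgage:,.0f}")  # hold the mortgage: $4,000
print(f"hold the MBS:      ${as_mbs:,.0f}")       # hold the MBS:      $1,600
print(f"ratio: {as_mbs / as_mortgage:.0%}")       # ratio: 40%
```

Because the 8% minimum applies to both holdings, the ratio depends only on the two risk weights: 20 divided by 50 gives 40 percent.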

These rules provided a powerful incentive for banks to originate mortgages they knew Fannie or Freddie would buy and securitize. The mortgages would then be available to buy back as part of a fancier instrument. The regulatory structure’s attempt at traffic signals was a flop. Markets themselves would not have produced such persistently bad signals or such a horrendous outcome. Once these securities became popular investment vehicles for banks and other institutions (thanks mostly to the regulatory interventions that created and sustained them), still other instruments were built on top of them. This is where “credit default swaps” and other even more complex innovations come into the story. Credit default swaps were a form of insurance against the mortgage-backed securities failing to pay out. Such arrangements would normally be a perfectly legitimate form of risk reduction for investors, but given the house of cards that the underlying securities rested on, they likely accentuated the false “traffic signals” the system was creating.
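A stylized calculation shows how selling credit default swaps could turn from steady income into large losses. The sketch assumes a simple contract: the seller of protection collects an annual premium on the notional amount and, if the covered security defaults, pays the notional times one minus the recovery rate. The function name, the 50-basis-point premium, the 40% recovery rate and the contract sizes are all hypothetical, and real contracts add details (premium timing, collateral calls, settlement mechanics) that are ignored here.

```python
def seller_profit(notional: int, premium_bps: int, years: int,
                  defaulted: bool, recovery_pct: int) -> int:
    """Protection seller's profit in dollars on one simplified credit default swap."""
    premiums = notional * premium_bps * years // 10_000  # basis points -> dollars
    payout = notional * (100 - recovery_pct) // 100 if defaulted else 0
    return premiums - payout


# Hypothetical book: 100 swaps of $10 million each on similar mortgage securities.
quiet = 100 * seller_profit(10_000_000, 50, 3, defaulted=False, recovery_pct=40)
crisis = 100 * seller_profit(10_000_000, 50, 3, defaulted=True, recovery_pct=40)
print(f"{quiet:+,}")   # +15,000,000
print(f"{crisis:+,}")  # -585,000,000
```

Because all the swaps in this example reference securities backed by the same housing market, the defaults do not arrive one at a time. A seller that treated them as independent, rarely triggered contracts would see years of small gains wiped out at once.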

“I set an ambitious goal. It’s one that I believe we can achieve. It’s a clear goal, that by the end of this decade we’ll increase the number of minority homeowners by at least 5.5 million families. Some may think that’s a stretch. I don’t think it is. I think it is realistic. I know we’re going to have to work together to achieve it. But when we do, our communities will be stronger and so will our economy. Achieving the goal is going to require some good policies out of Washington. And it’s going to require a strong commitment from those of you involved in the housing industry.” – President George W. Bush, 2002

By 2006, the Federal Reserve saw the housing bubble it had been so instrumental in creating and moved to prick it by reversing monetary policy. Money and credit were constricted and interest rates were dramatically raised. It would be only a matter of time before the bubble burst.

Deregulation, a False Culprit

It is patently incorrect to say that “deregulation” produced the current crisis [See Appendix A]. While it is true that new instruments such as credit default swaps were not subject to a great deal of regulation, this was mostly because they were new. Moreover, their very existence was an unintended consequence of all the other regulations and interventions in the housing and financial markets that had taken place in prior decades. The most notable “deregulation” of financial markets in the 10 years prior to the crash of 2008 was the Gramm-Leach-Bliley Act, passed in 1999 during the Clinton administration, which allowed commercial banks, investment banks and securities firms to merge in whatever manner they wished, eliminating regulations dating from the New Deal era that prevented such activity. The effects of this Act on the housing bubble itself were minimal. Indeed, its passage turned out to be helpful, not harmful, during the 2008 crisis, because failing investment banks were able to merge with commercial banks and avoid bankruptcy.

The housing bubble ultimately had to come to an end, and with it came the collapse of the instruments built on top of it. Inflation-financed booms end when the industries being artificially stimulated by the inflation find it increasingly difficult to buy the inputs they need at prices that are profitable and also find it increasingly difficult to find buyers for their outputs. In late 2006, housing prices topped out and began to fall as glutted markets and higher input prices due to the previous years’ race to build began to take their toll.

Falling housing prices had two major consequences for the economy. First, many homeowners found themselves in trouble with their mortgages. The low- or no-equity mortgages that had enabled so many to buy homes on the premise that prices would keep rising now came back to bite them. The falling value of their homes meant they owed more than the homes were worth. This problem was compounded in some cases by adjustable-rate mortgages with low “teaser” rates for the first few years that then jumped to market rates. Many of these mortgages were on houses that people hoped to “flip” for an investment profit, rather than on primary residences. Borrowers could afford the low teaser payments and expected to recoup their costs from the gain in value. But with the collapse of housing prices underway, these homes could not be sold for a profit, and when the rates adjusted, many owners could no longer afford the payments. Foreclosures soared.
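The payment shock at a teaser-rate reset can be estimated with the standard level-payment amortization formula, M = P·i / (1 - (1 + i)^-n), where i is the monthly rate and n the number of monthly payments. The loan below is hypothetical: a $200,000, 30-year “2/28” adjustable-rate mortgage with a 2% teaser rate for 24 months that then resets to 7% on the remaining balance. The function names and all numbers are illustrative assumptions.

```python
def monthly_payment(principal: float, annual_rate: float, months: int) -> float:
    """Level payment that fully repays `principal` over `months` at `annual_rate` (e.g. 0.07)."""
    i = annual_rate / 12
    if i == 0:
        return principal / months
    return principal * i / (1 - (1 + i) ** -months)


def balance_after(principal: float, annual_rate: float, payment: float, months_paid: int) -> float:
    """Remaining balance after `months_paid` payments: add a month's interest, subtract the payment."""
    i = annual_rate / 12
    balance = principal
    for _ in range(months_paid):
        balance = balance * (1 + i) - payment
    return balance


# Hypothetical 2/28 ARM: $200,000 over 30 years, 2% for 24 months, then 7%.
P, TERM, TEASER_MONTHS = 200_000, 360, 24
teaser = monthly_payment(P, 0.02, TERM)
remaining = balance_after(P, 0.02, teaser, TEASER_MONTHS)
reset = monthly_payment(remaining, 0.07, TERM - TEASER_MONTHS)
print(f"teaser payment:      ${teaser:,.0f}")
print(f"payment after reset: ${reset:,.0f}")
```

With these assumptions the payment rises from roughly $740 a month to roughly $1,290, an increase of about 75% for the same house and the same borrower.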

Second, with housing prices falling and foreclosures rising, the stream of payments coming into those mortgage-backed securities began to dry up. Investors began to re-evaluate the quality of those securities. As it became clear that many of those securities were built upon mortgages with a rising rate of default and homes with falling values, the market value of those securities began to fall. The investment banks that held large quantities of securities were forced to take significant paper losses. The losses on the securities meant huge losses for those that sold credit default swaps, especially AIG. With major investment banks writing down so many assets and so much uncertainty about the future of these firms and their industry, the flow of credit in these specific markets did indeed dry up. But these markets are only a small share of the whole commercial banking and finance sector. It remains a matter of much debate just how dire the crisis was in September 2008. Even if it was as dire as claimed, however, the proper course of action was to allow those firms to fail and use standard bankruptcy procedures to restructure their balance sheets.

“I think this is a case where Fannie and Freddie are fundamentally sound, that they are not in danger of going under.” – Barney Frank, 2008

The Recession is the Recovery

The onset of the recession and its visible manifestations in rising unemployment and failing firms led many to call for a “recovery plan.” But it was a misguided attempt to “plan” the monetary system and the housing market that got us into trouble initially. Furthermore, recession is the process by which markets recover. When one builds a 70-story skyscraper on a foundation made for a small cottage, the building should come down. There is no use in erecting an elaborate system of struts and supports to keep the unsafe structure aloft. Unfortunately, once the weaknesses in the U.S. economic structure were exposed, that is exactly what the federal government set about doing.

One of the major problems with the government’s response to the crisis has been the failure to understand that the bust phase is actually the correction of previous errors. When firms fail and workers are laid off, when banks reconsider the standards by which they make loans, when firms start (accurately) recording bad investments as losses, the economy is actually correcting for previous mistakes. It may be tempting to try to keep workers in the boom industries or to maintain investment positions, but the economy needs to shift its focus. Corrections must be permitted to take their course. Otherwise, we set ourselves up for more painful downturns down the road. (Remember, the 2008 crisis came about because the Federal Reserve did not want the economy to go through the painful process of reordering itself following the collapse of the dot-com bubble.) Capital and labor must be reallocated, expectations must adjust, and the economic system must accommodate the existing preferences of consumers and the real resource constraints that producers face. These adjustments are not pleasant; they are in fact often extremely painful to the individuals who must make them, but they are also essential to getting the system back on track.

When government takes steps to prevent the adjustment, it only prolongs and retards the correction process. Government policies of easy credit produce the boom. Government policies designed to prevent the bust have the potential to transform a market correction into a full-blown economic crisis.

No one wants to see the family business fail, or neighbors lose their jobs, or charitable groups stretched beyond capacity. But in a market economy, bankruptcy and liquidation are two of the primary mechanisms by which resources are reallocated to correct for previous errors in decision-making. As Lionel Robbins wrote in The Great Depression, “If bankruptcy and liquidation can be avoided by sound financing nobody would be against such measures. All that is contended is that when the extent of mal-investment and over-indebtedness has passed a certain limit, measures which postpone liquidation only tend to make matters worse.”

Seeing the recession as a recovery process also implies that what looks like bad news is often necessary medicine. For example, slackening home sales, falling new housing starts, and job losses in the financial sector are reported as bad news. In fact, these developments are a necessary part of recovery, as they are evidence of the market correcting the mistakes of the boom. We built too many houses, and we devoted too many resources to the financial instruments that grew out of that housing boom. Getting the economy right again requires that resources move away from those industries and into new areas. Politicians often claim they know where resources should be allocated, but the Great Recession of 2008 is only the latest proof that they do not.

The Bush administration made matters worse by bailing out Bear Stearns in the spring of 2008. This sent a clear signal to financial firms that they might not have to pay the price for their mistakes. Then, after that zig, the administration zagged when it let Lehman Brothers fail. Some argue that allowing Lehman to fail precipitated the crisis. We would argue that the Lehman failure was a symptom of the real problems we have already outlined. Having set up the expectation that failing firms would be bailed out, the federal government surprised and confused investors by refusing to bail out Lehman, leading many to withdraw from the market. Their reaction was not the necessary consequence of letting large firms fail; rather, it was the result of confusing and conflicting government policies. The tremendous uncertainty created by the administration’s arbitrary and unpredictable shifts (most notably Bernanke and Paulson’s unconvincing September 23, 2008, testimony on the details of the Troubled Asset Relief Program) was the proximate cause of the investor withdrawals that prompted the massive bailouts that came in the fall, including those of Fannie Mae and Freddie Mac.

The Bush bailout program was problematic in at least two ways. First, the rationale for such aggressive government action, including the Fed’s injection of billions of dollars in new reserves, was that credit markets had frozen up and no lending was taking place. Several observers at the time called this claim into question, pointing out that aggregate new lending numbers, while growing much more slowly than in the months prior, had not dropped to zero.

Markets in which the major investment banks operated had indeed slowed to a crawl, both because many of their housing-related holdings were being revealed as mal-investments and because the inconsistent political reactions were creating much uncertainty. The regular commercial banking sector, however, was by and large continuing to lend at prior levels.

Second, and more important, the various bailout programs preserved the very errors that were in the process of being corrected! Bailing out firms that are suffering major losses because of errant investments simply prolongs the mal-investments and prevents the necessary reallocation of resources.

The Obama administration’s nearly $800 billion stimulus package in February of 2009 was also predicated on false premises about the nature of recession and recovery. In fact, these were the same false premises which informed the much-maligned Bush Administration approach to the crisis. The official justification for the stimulus was that only a “jolt” of government spending could revive the economy.

The fallacy of job creation by government was first exposed by the 19th-century French economist Frédéric Bastiat with his story of the broken window. Imagine that a young boy throws a rock through a shop window, breaking it. The townspeople gather and bemoan the loss to the store owner. But eventually one notes that it means more business for the glazier. Another observes that the glazier will then have money to spend on new shoes, and the shoe seller will then have money to spend on a new suit. Soon, the crowd convinces itself that the broken window is actually quite a good thing.

The fallacy, of course, is that if the window had never been broken, the store owner would still have a functioning window and could spend the money on something else, such as new stock for his store. All the breaking of the window does is force the store owner to spend money he would not otherwise have had to spend. There is no net gain in wealth here. If there were, why wouldn’t we recommend urban riots as an economic recovery program?

When government attempts to “create” a job, it is not unlike a vandal who “creates” work for a glazier. There are only three ways for a government to acquire resources: it can tax, it can borrow, or it can print money (inflate). Whichever method is used, the money that government spends on any stimulus must come out of the private sector. If it is through taxes, the private sector obviously has less to spend, leading to private-sector job losses that at least offset any jobs created by government. If it is through borrowing, that lowers the savings available to the private sector (and raises interest rates in the process), reducing the amount the sector can borrow and the jobs it can create. If it is through printing money, it reduces the purchasing power of private-sector incomes and savings. When we add to this the general inefficiency of the heavily politicized public sector, it is quite probable that government spending programs will cost more jobs in the private sector than they create.

“This [Government Sponsored Housing] is one of the great success stories of all time…” – Chris Dodd, 2004

The Japanese experience during the 1990s is telling. Following the collapse of their own real estate bubble, Japan’s government launched an aggressive effort to prop up the economy. Between 1992 and 1995, Japan passed six separate spending programs totaling 65.5 trillion yen. But they kept upping the ante. In April of 1998, they passed a 16.7 trillion yen stimulus package. In November of that year, it was an additional 23.9 trillion yen. Then there was an 18 trillion yen package in 1999 and an 11 trillion yen package in 2000. In all, the Japanese government passed 10 (!) different fiscal “stimulus” packages, totaling more than 100 trillion yen. Despite all of these efforts, the Japanese economy still languishes. Today, Japan’s debt-to-GDP ratio is one of the highest in the industrialized world, with nothing to show for it. This is not a model we should want to imitate.

It is also the same mistake the United States made in the Great Depression, when both the Hoover and Roosevelt administrations tried to fight the deepening downturn through extensive federal intervention and only made matters worse. Beyond the errors of the Federal Reserve System, which exacerbated a downturn that its own inflationary policies of the 1920s had created, Hoover himself tried to prevent a necessary fall in wages by persuading major industrialists not to cut them. He also proposed significant increases in public works and, eventually, a tax increase. All of these measures worsened the depression.

Roosevelt’s New Deal continued this set of policy errors. Despite claims during the current recession that the New Deal saved us from economic disaster, recent scholarship has solidly affirmed that the New Deal didn’t save the economy. Policies such as the Agricultural Adjustment Act and the National Industrial Recovery Act only interfered with the market’s attempts to adjust and recover, prolonging the crisis. Later policies scared off private investors, who were uncertain about how much, and in what ways, government would step in next. The result was that six years into the New Deal, unemployment rates were still above 17% and GDP per capita was still well below its long-run trend.

More recently, President Nixon’s wage and price controls, an attempt to fight the stagflation of the early 1970s, were quickly abandoned when they did nothing to reduce inflation or unemployment. Most telling for our case is that the Fed’s expansionary policies earlier this decade were intended to “soften the blow” of the dot-com bust in 2001. Of course, those policies gave us the inflationary boom that produced the crisis that began in 2008. If the current recession lingers or becomes a second Great Depression, it will not be because of problems inherent in markets, but because the political response to a politically generated boom and bust has prevented the error-correction process from doing its job. The belief that large-scale government intervention is the key to getting us out of a recession is a myth disproven by both history and recent events.

The Future That Awaits Our Children

Commentators have had a field day adding up the trillions of dollars that have been committed in the Bush bailout, the Obama stimulus, and the administration’s proposed budget for 2010. The explosion of spending and debt, whatever the final tab, is unprecedented by any measure. It will “crowd out” a significant portion of private investment, reducing growth rates and wages in the future. We are, in effect, reducing the income of our children tomorrow to pay for the bills of today and yesterday. Large government debt also creates a temptation to inflate. In order for governments to borrow, someone must be willing to buy their bonds. Should confidence in a government fall far enough (China, notably, has expressed some reluctance to continue buying our debt), buyers may be hard to come by. That puts pressure on the government’s monetary authorities to “lubricate” the system by creating new money and credit from thin air.

So, even if the economy gets a lift in the near term from either its own corrective mechanisms or from the government’s reinflation of money and credit, we have not recovered from the hangover. More of what caused the Great Recession of 2008 (easy money, regulatory interventions that direct capital in unsustainable directions, politicians and policy-makers rigging financial markets) is not likely to produce anything but the same outcome: asset price inflation and an eventual “adjustment” we call a recession or depression. Along the way, we will accumulate monumental debts that accentuate the future downturn and saddle us with new burdens.

Unless we can begin to undo the mistakes of the last decade or more, the future that awaits our children will be one that is poorer and less free than it should have been. With politicians mortgaging future generations to the tune of trillions, running and subsidizing auto and insurance companies, spending blindly, and printing money hand over fist, all while blaming free enterprise for their own errors, we have a great deal to learn.

As the saying often attributed to Albert Einstein goes, doing the same thing over and over again and expecting different results is the definition of insanity. The best we can hope for is that we learn the right lessons from this crisis. We cannot afford to repeat the wrong ones.

“The basic point is that the recession of 2001 wasn’t a typical postwar slump…. To fight this recession the Fed needs more than a snapback… Alan Greenspan needs to create a housing bubble to replace the Nasdaq bubble.” – Paul Krugman, 2002

Appendix A: The Myth of Deregulation

Appendix B: Government Interventions During Crisis Create Uncertainty

Appendix C: Suggested Readings

Cole, Harold and Lee E. Ohanian. 2004. New Deal Policies and the Persistence of the Great Depression: A General Equilibrium Analysis. Journal of Political Economy 112: 779-816.

Friedman, Jeffrey. 2009. A Crisis of Politics, Not Economics: Complexity, Ignorance, and Policy Failure. Critical Review 21: 127-183.

Higgs, Robert. 2008. Credit Is Flowing, Sky Is Not Falling, Don’t Panic. The Beacon. Available at http://www.independent.org/blog/?p=201

Marenzi, Octavio. 2008. Flawed Assumptions about the Credit Crisis: A Critical Examination of US Policymakers. Celent Research. Available at http://www.celent.com/124_347.htm

Prescott, Edward and Timothy J. Kehoe (Editors). 2007. Great Depressions of the Twentieth Century. Minneapolis: Federal Reserve Bank of Minneapolis.

Taylor, John. 2009. Getting Off Track: How Government Actions and Interventions Caused, Prolonged, and Worsened the Financial Crisis. Stanford, CA: Hoover Institution Press.

Woods, Thomas. 2009. Meltdown: A Free-Market Look at Why the Stock Market Collapsed, the Economy Tanked, and Government Bailouts Will Make Things Worse. Washington, DC: Regnery.

Biographies

Lawrence W. Reed is president of the Foundation for Economic Education – www.fee.org – and president emeritus of the Mackinac Center for Public Policy.

Steven Horwitz is the Charles A. Dana Professor of Economics at St. Lawrence University in Canton, NY. He has been a visiting scholar at Bowling Green State University and the Mercatus Center at George Mason University.

Peter J. Boettke is the Deputy Director of the James M. Buchanan Center for Political Economy, a Senior Research Fellow at the Mercatus Center, and a professor in the economics department at George Mason University.

John Allison served as the Chief Executive Officer of BB&T Corp. until December 2008. Mr. Allison has been Chairman of BB&T Corp. since July 1989. He serves as a member of the American Bankers Association and The Financial Services Roundtable.

Peter J. Boettke

Peter Boettke is a Professor of Economics and Philosophy at George Mason University and director of the F.A. Hayek Program for Advanced Study in Philosophy, Politics, and Economics at the Mercatus Center. He is a member of the FEE Faculty Network.